
A study by IMDEA Networks reveals that major AI chatbots like ChatGPT and Grok leak user conversations to third-party trackers from Meta, Google, and TikTok. Grok was identified as the worst offender, sharing verbatim messages without user consent.


When you type something into an AI chatbot, you probably assume the conversation stays between you and the machine. You're wrong—and a new study spells out exactly who else is listening.

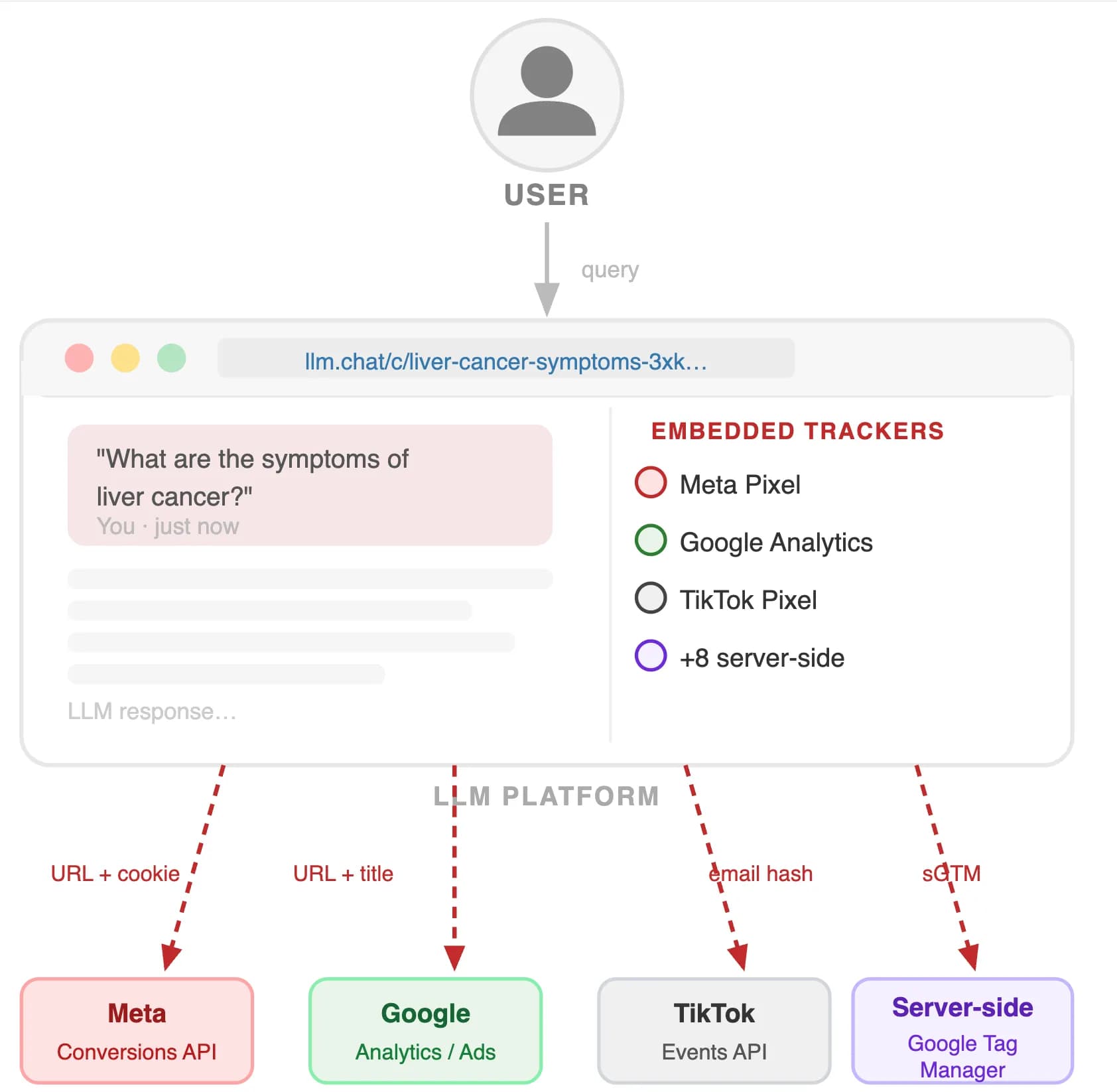

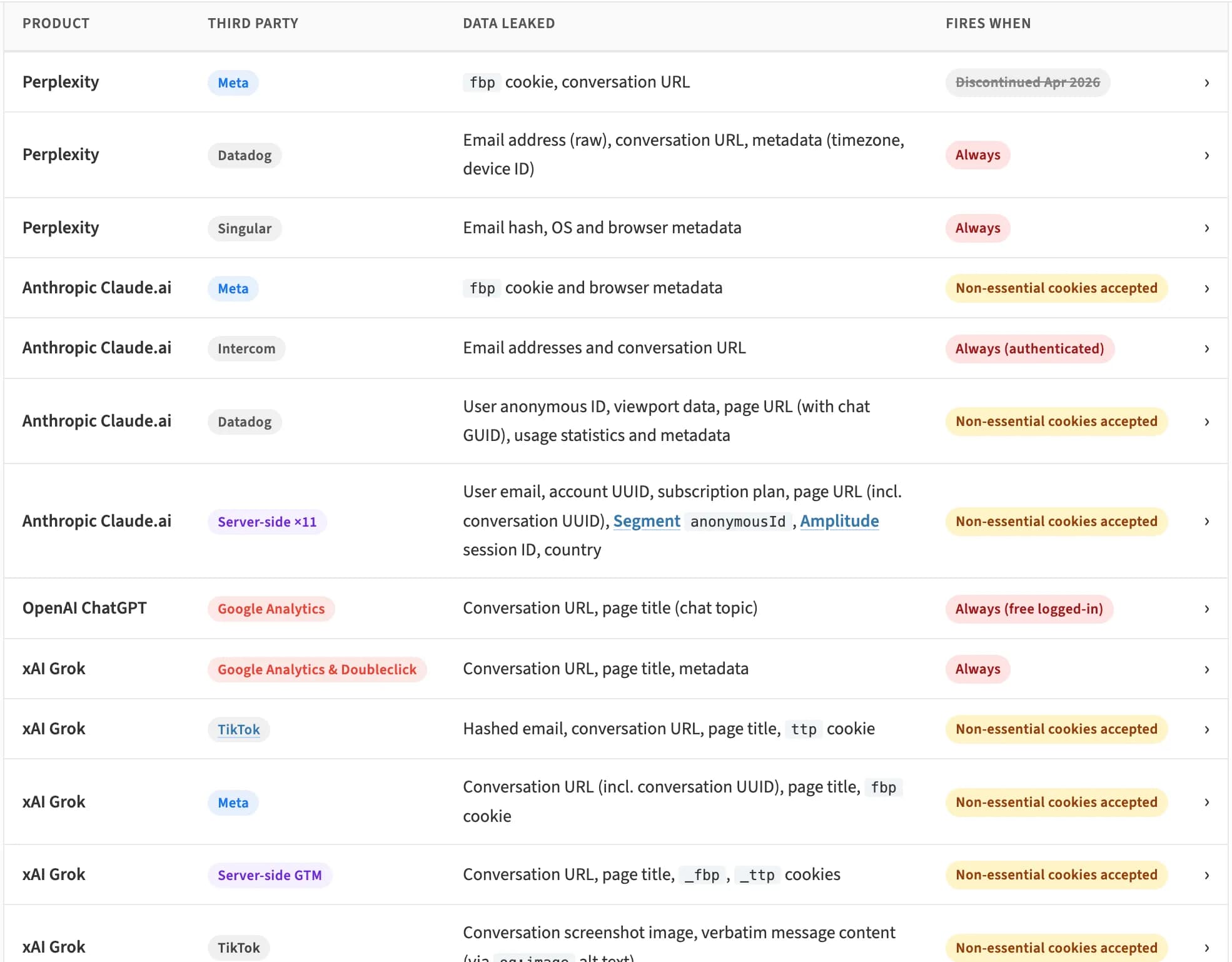

Researchers at IMDEA Networks Institute published findings on May 4 showing that four of the biggest AI assistants (ChatGPT, Claude, Grok, and Perplexity) quietly share data with third-party advertising and analytics services, including Meta, Google, and TikTok. The project, called LeakyLM, identified more than 13 trackers embedded across these platforms. None of them is disclosed to users in plain language.

Image: IMDEA Networks Institute

Think of it this way: Every time you open a chat, invisible software tools embedded in the webpage phone home to ad networks—sending details about who you are, what page you're on, and sometimes even what you typed.

The most basic leak is your conversation URL, the web address that points to a specific chat. Sounds harmless, right? The problem is that several platforms make those URLs publicly accessible by default, meaning anyone who has the link can read your conversation without logging in. When those URLs are also sent to Meta's or Google's ad systems, those companies gain the ability to read your chats.
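To make the mechanism concrete, here is a minimal Python sketch of how a tracking script can carry the current page address to an ad network. The endpoint `tracker.example`, the parameter names `ev`, `page_url`, and `cid`, and the chat permalink are all hypothetical; real pixels from Meta or Google use their own hosts and parameter names, and this is not code from the study.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical tracker endpoint; real pixels use their own hosts and parameter names.
TRACKER_ENDPOINT = "https://tracker.example/collect"


def build_beacon(page_url: str, cookie_id: str, event: str = "PageView") -> str:
    """Assemble the kind of request a tracking script fires when a page loads."""
    params = {"ev": event, "page_url": page_url, "cid": cookie_id}
    return f"{TRACKER_ENDPOINT}?{urlencode(params)}"


def url_seen_by_tracker(beacon: str) -> str:
    """Recover the page address from the beacon, as the tracker's server would."""
    query = parse_qs(urlparse(beacon).query)
    return query["page_url"][0]


# Hypothetical shared-chat permalink.
chat_url = "https://chat.example/share/3f9c2a"
beacon = build_beacon(chat_url, cookie_id="abc123")
print(beacon)
print(url_seen_by_tracker(beacon))  # the full chat link, intact
```

If the permalink is publicly readable, as the researchers found for Grok's guest chats, the string the tracker recovers is all it needs to open the conversation.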

"Leaking a URL is not just metadata—it can be equivalent to leaking the conversation itself," the researchers say.

Grok, Elon Musk's AI chatbot from xAI, is the most exposed. Guest conversations on the platform are public by default, with no login required to read them. TikTok's tracker received not just URLs but verbatim message content through what's called Open Graph metadata, a standard that sites use to generate link previews (a title, a description, and an image) when you share a link. In effect, TikTok's system received a snapshot of your conversation.
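A rough illustration of why Open Graph tags can expose message text: if a share page fills its preview tags with the start of the chat, anything that fetches that page, a tracker included, can read the text straight back out. The sketch below is hypothetical (the page layout, the `render_share_page` helper, and the sample message are invented for illustration) and is not Grok's actual markup.

```python
from html import escape
from html.parser import HTMLParser


def render_share_page(title: str, first_message: str) -> str:
    """Hypothetical share page whose link-preview tags quote the chat itself."""
    return (
        "<html><head>"
        f'<meta property="og:title" content="{escape(title)}">'
        f'<meta property="og:description" content="{escape(first_message)}">'
        "</head><body>...</body></html>"
    )


class OpenGraphReader(HTMLParser):
    """Collects og:* meta tags, the way a link-preview fetcher would."""

    def __init__(self) -> None:
        super().__init__()
        self.tags: dict[str, str] = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        prop = attr.get("property") or ""
        if prop.startswith("og:") and attr.get("content") is not None:
            # HTMLParser has already unescaped the attribute value here.
            self.tags[prop] = attr["content"]


page = render_share_page("Chat", "My landlord won't return my deposit & I'm broke")
reader = OpenGraphReader()
reader.feed(page)
print(reader.tags["og:description"])  # the user's message, verbatim
```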



Image: IMDEA Networks Institute

Claude (Anthropic) and ChatGPT (OpenAI) have stronger access controls—your chats aren't public unless you choose to share them. But they still transmit conversation URLs and identifying data like advertising cookies to Meta and Google. For Claude, that data goes to 11 advertising platforms through Anthropic's own servers, not through the browser, which is why an ad blocker won't stop it.
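Why server-side forwarding slips past ad blockers can be shown with a toy model: a browser extension filters only the requests the browser itself makes, so an event relayed from the site's own server never reaches the filter. Everything here (the blocklist, the domains, and the function names) is hypothetical and simplified; it is not a description of Anthropic's actual setup.

```python
from urllib.parse import urlencode, urlparse

# Hypothetical tracker domain that a browser ad blocker knows about.
BLOCKLIST = {"tracker.example"}


def ad_blocker_allows(request_url: str) -> bool:
    """An ad blocker can only inspect requests made by the browser."""
    return urlparse(request_url).hostname not in BLOCKLIST


def event_query(page_url: str) -> str:
    return urlencode({"page_url": page_url})


def client_side_tracking(page_url: str) -> list[str]:
    """The browser calls the tracker directly, so the ad blocker can drop it."""
    beacon = f"https://tracker.example/collect?{event_query(page_url)}"
    return [beacon] if ad_blocker_allows(beacon) else []


def server_side_tracking(page_url: str) -> list[str]:
    """The browser calls only the first-party site, which forwards the event."""
    first_party = f"https://chat.example/api/event?{event_query(page_url)}"
    if not ad_blocker_allows(first_party):  # the only request the browser makes
        return []
    # This hop is server-to-server, so no browser extension ever sees it.
    return [f"https://tracker.example/collect?{event_query(page_url)}"]


chat_url = "https://chat.example/chat/7b21e0"
print(client_side_tracking(chat_url))  # → []
print(server_side_tracking(chat_url))  # one tracker URL: the event still arrives
```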

Perplexity removed its Meta tracker last month.

The study acknowledges it hasn't proven that Meta or Google actually read anyone's chats. But the infrastructure to do so exists, and the data is being transmitted. "The studied LLMs offer privacy controls to limit conversation visibility, but may mislead users by implying stronger protections than are actually enforced," researchers argue. "While we do not yet have evidence that conversations are read by trackers, permalink dissemination and by extension the capability to read them exist, and therefore the potential risk."

This isn't the first time AI platforms have faced scrutiny on privacy. Claude recently began requiring government ID verification for new subscribers—a move that drew backlash from the same privacy-conscious users who had switched from ChatGPT over surveillance concerns, as Decrypt reported last month.

For now, practical steps are limited. On Grok, restrict conversation visibility in settings and explicitly revoke any link you've already shared. On Claude, rejecting non-essential cookies at least disables the Meta Pixel. On Perplexity, set conversations to Private. On ChatGPT, rejecting cookies where possible reduces exposure, though Google Analytics still runs for free logged-in users.

For a deeper dive into protecting yourself, our guide on AI privacy is a good place to start.

The researchers plan to extend their analysis to Meta AI, Microsoft Copilot, and Google Gemini, which were excluded from this round because their makers operate as both AI providers and ad companies, making the threat model more complicated.

The findings were submitted to Data Protection Authorities on April 13, 2026. xAI was notified on April 17. As of publication, no company has responded.